Mangan Software Solutions releases SLM™ Version 2.5s15


A strategic partnership has been formed between AsInt, Inc. (AsInt) and Mangan Software Solutions (MSS). This relationship allows AsInt to deliver Mangan’s Safety Lifecycle Manager (SLM®) and subject matter expertise around Process, Functional, and Asset Safety Management as part of the SAP Master. AsInt’s Asset Integrity-related apps will now include the business logic of Safety Lifecycle Manager (SLM®) developed by MSS to provide a single master data-driven dataset in the SAP Business Technology Platform (BTP). This approach removes data silos and point applications from the end user’s IT ecosystem, allowing operators not only to take advantage of cloud technologies within SAP but also to adopt a scalable enterprise approach to mission-critical data and information.

SLM® is known for providing comprehensive applications for handling the entire Safety Lifecycle end to end, covering analysis, detailed design & engineering, as well as safety performance monitoring and analytics. AsInt has established a strong presence providing Asset Integrity Apps, such as Thickness Management, Inspections, Risk Based Inspection, HCA, PHA, etc., as native SAP Master Data-driven apps. This strategic relationship will allow AsInt to deliver Mangan’s SLM® technology within the SAP Business Technology Platform (BTP). Combining this functionality with the core EAM (Enterprise Asset Management) capabilities of SAP will be the final step in providing a consolidated APM (Asset Performance Management) and EAM option for customers.

“MSS and AsInt are very excited to be able to introduce the discipline of Safety Lifecycle Management to the global SAP user base,” Steve Whiteside, President of Mangan Software, said. “Using MSS’s already proven SLM technology, both existing and future SAP customers will now have access to the highest standards of process and functional safety solutions.”

Rohan Patel, Founder & CEO AsInt, Inc., stated, “By partnering with Mangan Software Solutions, AsInt can now offer a wide range of SAP customers access to Mangan’s award-winning flagship product, Safety Lifecycle Manager (SLM®), as an embedded solution and process in SAP. This is expected to enhance existing AsInt solutions and open new applications in various industries. We view Safety Lifecycle Management as an excellent addition to our growing SAP ecosystem.”

About AsInt:

AsInt is changing how people develop and deploy software across industries such as oil and gas, petrochemical, specialty chemical, railways, dairy, food & beverages, and packaging. Our goal is to create a full suite of applications that meet a range of asset integrity needs regardless of your platform, while also making the software accessible via app stores, fit for purpose, and easy to use. The solutions on the market today have begun to shift their focus to things like IIOT, big data, and analytics, leaving their core user base to try and implement basic workflow-supporting functionality. AsInt is renewing the focus of software on functionality and usability, committed to closing the gaps that many end users have been talking about for years. These apps focus on resources, reduce the cost of ownership, increase availability, and ensure the safe operations of the physical asset.

About MSS:

MSS, the leading independent supplier of enterprise-wide Safety Lifecycle Management software, was named the #1 Global Supplier in the ARC Advisory Group’s 2019 Process Safety Lifecycle Management Global Market Report for its award-winning product, Safety Lifecycle Manager (SLM®). MSS’s core business is enabling their client base to digitalize the engineering disciplines of Process and Functional Safety engineering and asset integrity, leveraging the technology in its proprietary technology platform SLM. They offer a wide array of complementary solutions to support the SLM product’s implementation, hosting, and integration.

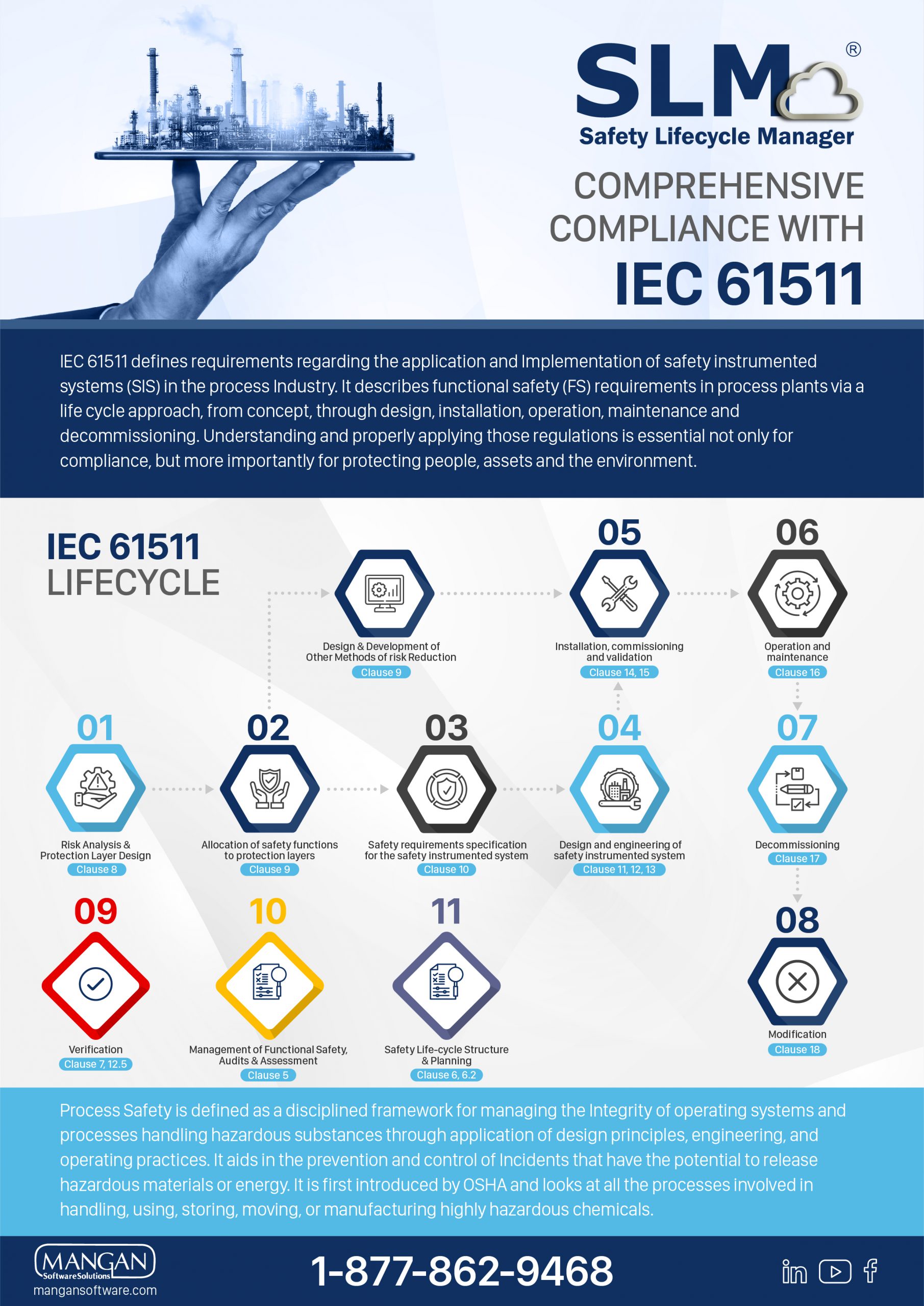

IEC 61511 defines requirements regarding the application and implementation of safety instrumented systems (SIS) in the process industry. It describes functional safety (FS) requirements in process plants via a life cycle approach, from concept, through design, installation, operation, maintenance and decommissioning. Understanding and properly applying those requirements is essential not only for compliance, but more importantly for protecting people, assets and the environment.

SLM has expanded its integration reach to include cloud based historical data integration. While SLM itself has been cloud based for some time, most data sources for plant operational data (historians) have required on-premises connectivity for access.


Since the standard was first issued in 2003, PSM-covered operating sites have been working hard to get to the point where all SIS functions are kept up to date with SIL Verification, an SRS per function, and regular proof testing. Edition 2 now requires that historical performance of implemented SIS systems be fed back into PHA processes to validate assumptions, which has cascading effects on SIS Design/SRS and on field instrumentation. This is a complex, multi-disciplined process to manage even if there are no major changes to an existing process between safety and operability re-validations.


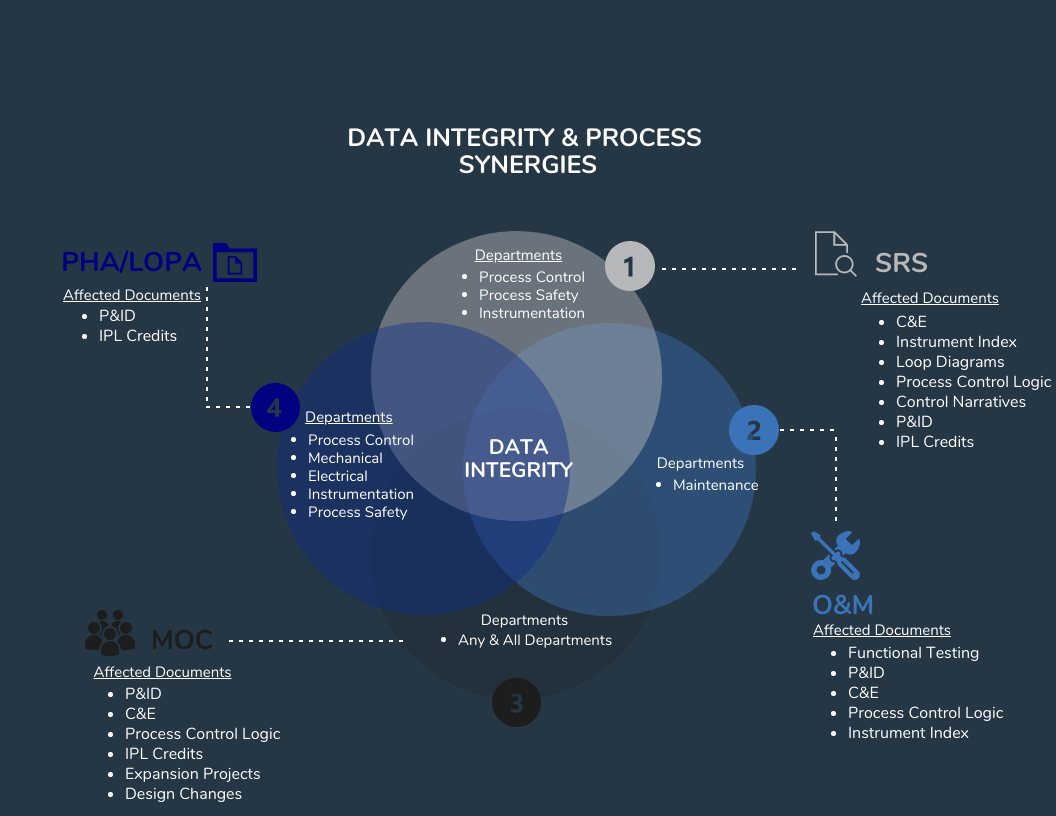

Many of us may remember playing a game as a child, commonly referred to as Telephone, where everyone would sit in a circle with the sole responsibility of passing along a message to the next player. The goal of this game was to successfully pass the original message back to the first player without any changes to the original message. If your experiences were anything like mine, you would agree that the final message rarely made it back to the first player in the same state that it left in. In some cases, the final message was so far from the original that it would induce laughter throughout the whole group. Although this game was supposed to provide laughter and enjoyment during our childhood, it was also a good teaching moment to reinforce the importance of detail and attention. This exercise is a simple demonstration of the importance of data integrity and communication and their reliance on each other.

In the human body, blood transports oxygen absorbed through your lungs to your body’s cells with assistance from your heart, while the kidneys are continuously filtering the same blood for impurities. In this example, three systems (heart, kidneys, lungs) are working together to ensure adequate maintenance of the body. Much like the human body, the process industry is complex and requires multiple systems working together simultaneously to achieve their goal. If any system were to break, it would result in reduced performance and possibly, eventual failure. These data integrity challenges are very similar, regardless of whether tasked with designing a new site or maintaining existing facilities.

Chemical plants, refineries, and other process facilities maintain multiple documents that are required to operate the facility safely. Any challenges with maintaining these documents and work processes could result in process upsets, injuries, downtime, production loss, environmental releases, lost revenue, increased overhead, and many more negative outcomes. Below is just a small sample of the critical documents that must be updated to reflect actual engineering design:


There are many processes and workflows that may trigger required changes to the above documentation, such as PHAs, LOPAs, HAZOPs, MOCs, SRSs, Maintenance Events, and Action Items, to name a few. Each of these processes requires specific personnel from multiple groups to complete. As the example earlier in this blog pointed out, it can be a challenge to communicate efficiently and effectively in a small group, much less across multiple groups and organizations. Data integrity can easily be compromised by having multiple processes and multiple workgroups involved in decisions affecting multiple documents.

When starting a new project or becoming involved in a new process, it is essential to consider how the requested changes will affect other workgroups and their respective documentation. Will your change impact others? Could understanding how your changes affect other data and workgroups minimize rework or prevent incidents? Could seeing the full picture help you to make better decisions for your work process? Below are some approaches to consider to improve data integrity and communication in your workspace:

The Digital Transformation of Control and Safety Systems has come a long way. These systems used to be simple, yet they were unreliable, not very robust, or died from neglect. In the past, the term Safety System generally wasn’t used very much; rather, you would see terms such as ESD and Interlock. The technologies used in the past were often process-connected switches and relays that were difficult to monitor, troubleshoot, and maintain. Field instrumentation used 3-15 psig air or 4-20 mA signals. Things have changed since then. The systems have become more effective, yet with that, a lot more complicated as well.

As control systems, safety systems, and field instrumentation were digitized, the amount of data a user has to specify and manage grew by orders of magnitude. Things that were defined by hardware design, that were generally unchangeable after components were specified, became functions of software and user configuration data which could be changed with relatively little effort. This caused the management of changes, software revisions, and configuration data to become a major part of ownership.

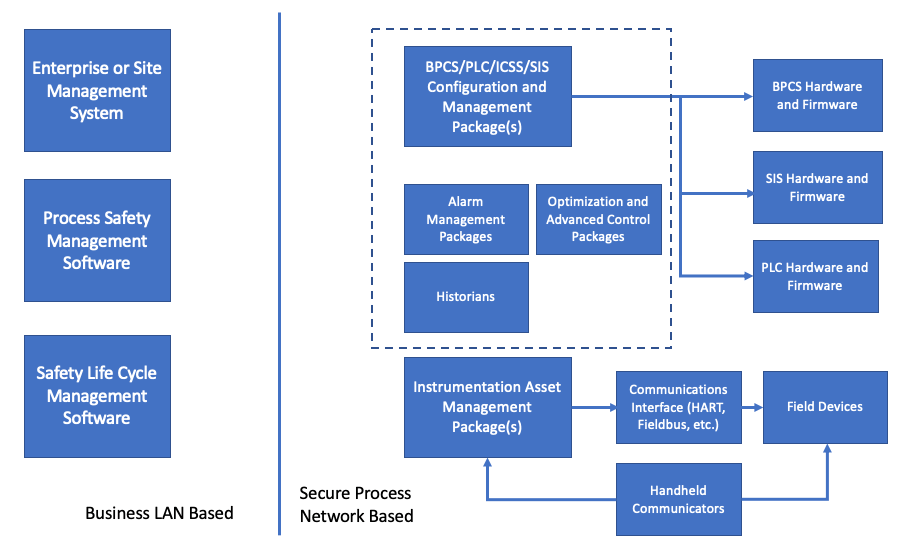

The problem is that the market is dominated by proprietary systems that apply only to a single manufacturer’s line of products, so the user is required to have multiple software packages to support the wide variety of instrumentation, control systems, safety systems and maintenance management support systems that exist in any of today’s process plants. Here’s an overview of the evolution and landscape of these systems and the relative chaos that still exists.

Field Instrumentation

Back in the early 1980’s, an operating company was involved in the first round of process control system upgrades to the first generation of DCS that were available. There were projects for field testing prototypes of a new digital transmitter from major manufacturers. The transmitters that were being tested were similar to the 4-20 mA transmitters, but the digital circuitry that replaced the old analog circuitry was programmed by a bulky handheld communicator. It took about 10 parameters to set up the transmitter.

Now you can’t buy anything other than a digital transmitter, and instead of a few parameters available, there are dozens. Digital valve controllers have also become common, and the parameters available number in the hundreds. Device types with digital operation have also exploded, including adoption of wireless and IOT devices. The functionality and reliability of these devices far exceed those of their prior analog circuit-based relatives. The only cost is that someone has to manage all of that data. A binder full of instrument data sheets just doesn’t work anymore.

Field Instrumentation Management Systems

When digital field instrumentation was first introduced the only means of managing configuration data for each device was through a handheld communications device, and the configuration data resided only on the device. This was simple enough when the parameters mirrored the settings on non-smart devices. However, these devices got more sophisticated and the variety of devices available grew. Management of their configuration data became more demanding and the need for tools for management of that data became fairly obvious.

The market responded with a variety of Asset Management applications and extended functionality from basic configuration data management to include calibration and testing records and device performance monitoring. The systems were great, but there was a major problem in that each manufacturer had packages that were proprietary to their lines of instrumentation.

There have been attempts to standardize instrument Asset Management, such as the efforts of the FDT Group, but to date most users have gravitated towards specific manufacturer software based upon their Enterprise or Site standard suppliers. This leaves a lot of holes when devices from other suppliers are used, especially when niche devices or exceptionally complex instruments, such as analyzers, are involved. Most users end up with one package for the bulk of their instrumentation and then a mix of other packages to address the outliers, or no management system for some devices. Unfortunately, manufacturers aren’t really interested in one standard.

Communications Systems

As digital instrumentation developed, the data available was still constrained by a single process variable transmitted over the traditional 4-20 mA circuit. This led to the development of digital communications methods that would transmit considerable device operation and health data over top of, or in replacement of, the 4-20 mA PV signal. The first of these was the HART protocol, developed by one manufacturer but released to the industry as an open protocol. However, other manufacturers developed their own protocols that were incompatible with HART. As with Asset Management software, the market is divided up into competing proprietary offerings and a User has to make choices on what to use.

In the 1990’s, in an attempt to standardize something, the Fieldbus Foundation was established to define interoperable protocols. Maneuvering for competitive advantage led some companies to establish their own consortiums such as Profibus and World FIP that used their own protocols. The field instrument communications world has settled on a few competing and incompatible systems. Today a user basically has to make a choice between HART, Fieldbus, Profibus and DeviceNet, and then use the appropriate, often proprietary, support software and hardware.

Distributed Control Systems and PLC’s

Back in 1980, programming these devices required customized hardware. The PLC had its own suitcase-sized computer that could only be used for the PLC. Again, data was reasonably manageable, but crude by today’s standards.

Over the years the power of the modules has evolved from the original designs that could handle 8 functions, period, to modules that can operate all or most of a process plant. The industry came up with a new term, ICSS, for Integrated Control and Safety System, to describe DCS’s that had been expanded to include PLC functions as well as Safety Instrumented Systems.

The data involved in these systems has likewise exploded, as have the tools and procedures for managing that data. The manufacturers of the DCS, PLC and SIS systems have entire sub-businesses devoted to the management of the data associated with their systems.

As with other systems software, the available applications are usually proprietary to specific manufacturers. Packages that started out as simpler (relatively speaking) configuration management software were extended to include additional functions such as alarm management, loop tuning and optimization, and varying degrees of integration with field device Asset Management Systems.

Safety Instrumented Systems

Safety Instrumented System logic solvers were introduced in the early 1980’s, first as rather expensive and difficult-to-own stand-alone systems. The SIS’s evolved and became more economical. While there are still stand-alone SIS’s available, some of the DCS manufacturers have moved to offering Integrated Control and Safety Systems (ICSS) in which hardware and software for Basic Process Control (BPCS), SIS and higher-level functions such as Historians and Advanced Control applications are offered within integrated product lines.

As with all of the other aspects of support software, the packages available for configuration and data management for SIS hardware and software are proprietary to the SIS manufacturers.

Operation and Maintenance Systems

The generalized Operation and Maintenance Systems that most organizations use to manage their maintenance organizations exist and have been well developed for what they do. Typically, these packages are focused on management of work orders, labor and warehouse inventory management and aren’t at all suitable for management of control and safety systems.

Most of the currently available packages started out as offerings by smaller companies but have gotten sucked up into large corporations that have focused on extending what were plant-level applications into full Enterprise Management Systems that keep the accountants and bean counters happy, but make life miserable for the line operations, maintenance and engineering personnel. I recall attending an advanced control conference in which Tom Peters (In Search of Excellence) was the keynote speaker. He had a sub-text in his presentation that he hated EMS, especially SAP. His mantra was “SAP is for saps”, which was received with much head nodding in the audience of practicing engineers.

Some of the Operations and Maintenance Systems have attempted to add bolt on functionality, but in my view, they are all failures. As described above, the management tools for control and safety systems are fragmented and proprietary and attempting to integrate them into generalized Operation and Maintenance Systems just doesn’t work. These systems are best left to the money guys who don’t really care about control and safety systems (except when they don’t work).

Process Safety System Data and Documentation

The support and management software for SIS’s addresses only the nuts and bolts of programming and maintaining SIS hardware. These packages have no, or highly limited, functionality for managing the overall Safety Life Cycle from initial hazard identification through testing and maintaining of protective functions such as SIFs and other Independent Protection Layers (IPLs). Some of the Operation and Maintenance System suppliers have attempted to bolt on some version of Process Safety Management functionality, but I have yet to see one that was any good. In the last decade a few engineering organizations have released various versions of software that integrate the overall Safety Lifecycle phases. The approach and quality of these packages vary. I’m biased and think that Mangan Software Solutions’ SLM package is the best of the available selections. However, the ARC Advisory Group also agrees.

Conclusions

The Digital Transformation of Control and Safety Systems has resulted in far more powerful and reliable systems than their analog and discrete component predecessors. However, the software required to support and manage these systems is a balkanized mix of separate, proprietary and incompatible software packages, each of which has a narrow scope of functionality. A typical plant user is forced to support multiple packages based upon the control and safety systems that are installed in their facilities. The selection of those systems needs to consider their support requirements, and once systems are selected it is extremely difficult to consider alternatives, as doing so usually requires a complete set of parallel support software which will carry its own set of plant support requirements. Typically, a facility will require a variety of applications which include:

So choose wisely.

Rick Stanley has over 40 years’ experience in Process Control Systems and Process Safety Systems with 32 years spent at ARCO and BP in execution of major projects, corporate standards and plant operation and maintenance. Since retiring from BP in 2011, Rick has consulted with Mangan Software Solutions (MSS) on the development and use of MSS’s SLM Safety Lifecycle Management software and has performed numerous Functional Safety Assessments for both existing and new SISs.

Rick has a BS in Chemical Engineering from the University of California, Santa Barbara and is a registered Professional Control Systems Engineer in California and Colorado. Rick has served as a member and chairman of both the API Subcommittee for Pressure Relieving Systems and the API Subcommittee for Instrumentation and Control Systems.

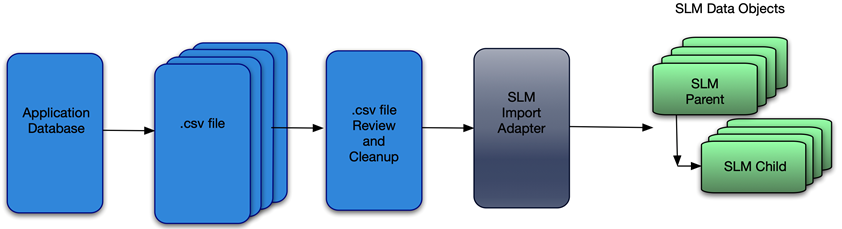

Once the user’s data has been exported to the intermediate .csv file, a data quality review and cleanup step is advisable. Depending upon the data source, there are likely to be many internal inconsistencies that are much easier to correct prior to import. These may be things as simple as spelling errors, completely wrong data, or even inconsistent data stored in the source application. I recall a colleague noting after a mass import from a legacy database to a SmartPlant Instrumentation database: “I didn’t realize how many ways there were to incorrectly spell Fisher.”

Once the data has been imported, correcting such things can be very tedious unless you are able to get into the database itself. For most users, errors such as this get corrected one object at a time. However, editing these types of problems out of the .csv file is pretty quick and simple as compared to post import clean up.
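As a rough illustration of that pre-import review, the sketch below scans one column of an exported .csv file for near-duplicate spellings and maps the rare variants to the most common one. It is only a minimal example under stated assumptions: the column names (`tag`, `manufacturer`), the sample rows, and the helper functions are hypothetical and are not part of the SLM® import templates, and the 0.8 similarity cutoff is an arbitrary starting point that should be reviewed by a person before any fix is applied.

```python
import csv
import difflib
import io
from collections import Counter

# Hypothetical sample of an exported file; real column names will differ.
SAMPLE_CSV = """tag,manufacturer
FV-101,Fisher
FV-102,Fisher
FV-103,Fischer
FV-104,FISHER
PT-201,Rosemount
PT-202,Rosemount
PT-203,Rosemont
"""


def suggest_spelling_fixes(rows, column, cutoff=0.8):
    """Map less common spellings in `column` to the most common close match."""
    counts = Counter(row[column].strip() for row in rows if row[column].strip())
    canonical = []  # spellings accepted as correct, most frequent first
    fixes = {}      # variant spelling -> suggested canonical spelling
    for value, _ in counts.most_common():
        lowered = [c.lower() for c in canonical]
        match = difflib.get_close_matches(value.lower(), lowered, n=1, cutoff=cutoff)
        if match:
            fixes[value] = canonical[lowered.index(match[0])]
        else:
            canonical.append(value)
    return fixes


def apply_fixes(rows, column, fixes):
    """Return new rows with the suggested spellings applied to `column`."""
    cleaned = []
    for row in rows:
        value = row[column].strip()
        cleaned.append({**row, column: fixes.get(value, value)})
    return cleaned


rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
fixes = suggest_spelling_fixes(rows, "manufacturer")
cleaned = apply_fixes(rows, "manufacturer", fixes)

# Print the suggestions so they can be reviewed before the file is rewritten.
for variant, suggestion in sorted(fixes.items()):
    print(f"{variant!r} -> {suggestion!r}")
```

The suggestions are deliberately printed rather than written back automatically, since two genuinely different manufacturers can have similar names; a reviewer would normally confirm the mapping and only then save the cleaned rows with `csv.DictWriter`.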

To import the data, the User goes to the Adapter Module, chooses the desired Import Adapter, and identifies the .csv file that contains the data. The SLM® solution does the rest.

It should also be noted that SLM® software is capable of exporting data too. The User selects data types to export along with the scope (e.g. a Site or Unit). The exported data is in the form of a .csv file. This can be used to import data into a 3rd party application, or to use a data template to import more data.
